Editor’s note: This is the first in a two-part series by Kate Robinson on the use and misuse of public opinion polling today. To be prepared for that call from the National Rifle Association, the Pew Research Center or Rasmussen Reports, check out Robinson’s second story, “Polling: A guide to what you need to know when you pick up the phone.”

Fewer and fewer politicians, if the proliferation of political polls is any guide, believe the old saw that “the only poll that matters is the one on Election Day.”

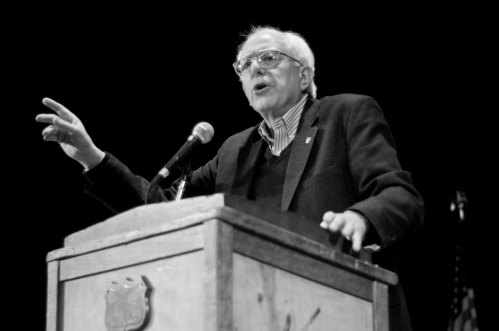

Perhaps they shouldn’t. There is an instructive story dating back to August 1990 and the congressional race pitting Bernie Sanders against incumbent Peter Smith. Polls had indicated Smith had a comfortable lead. But then a St. Michael’s College poll conducted for the Burlington Free Press showed the two neck and neck, says University of Vermont political science professor Garrison Nelson. It gave the Sanders campaign a new lease on life. Had the poll missed his rising popularity, that could have doomed his chances.

Nelson tells with gusto what happened next. The Associated Press, apparently questioning the validity of the poll results, did not put the story out at the top of its wire service feed. Nelson, who had evaluated the poll and found the sampling distribution representative and the questions fair, photocopied the story and faxed it to out-of-state news media. Roll Call published it; David Broder of The Washington Post came to Vermont to do a story.

“Suddenly Sanders looked like a viable candidate and the money started rolling into his coffers,” Nelson says. And we all know how that story ends.

Legitimate, balanced polling does reflect public opinion. What’s often overlooked is that reporting public opinion may then alter it. This is why tracking polls, rather than snapshots of a single moment in time, are considered the most useful kind of public opinion research.

The campaign season is about to start in earnest, but election polling in Vermont, both the horse-race variety and the issues-related kind, began in February. Vermonters can expect to see some sort of polling right up to the election, with a focus on whatever hot topics candidates choose in order to woo voters.

Nelson and others note that there is relatively little polling here. Vermont Public Radio and television station WCAX are still considering what and how much polling to do. After all, the cost, at somewhere between $7 and $9 per completed interview for a telephone poll using calling agents, is not negligible.

Polling nowadays comes in many forms: person-to-person phone calls, automated phone calls, online surveys, exit polls, robocalls. Public opinion research is commissioned by many organizations and political campaigns. That sponsorship should always be clear when results are reported. Reputable pollsters abide by professional standards that require transparency about the methodology used, going far beyond the margin of sampling error statement that is conventionally thought to signal a “scientific poll.”

Poll results can misrepresent public opinion by, for example, bending questions and responses to fit the sponsor’s goals or by using methods of reaching respondents that will not yield a representative respondent group. The media, voters and candidates need more than ever before to know how to gauge how reliable, fair and trustworthy a poll is.

How then to be an educated consumer of the many polls that come our way?

The first thing to know is that there is no real regulation in the industry, only self-regulation. The industry standard bearers, the National Council on Public Polls and the American Association for Public Opinion Research, spell out sound and ethical practices clearly. Members of these organizations pledge to abide by a code of professional ethics with specific standards for transparency about methodology and disclosures of poll content and sponsorship. Yet relatively few polling organizations today are members of these organizations.

Most major polling organizations, from Gallup and Roper to Pew Research, ABC and CBS News, Marist and Princeton Surveys, belong to the NCPP. It sets standards for the industry and has long called for “routine disclosures of methods and data” when poll results are released to the public, according to its website. It calls for publishing “complete wording and ordering of questions mentioned in or upon which the release is based,” because “how questions in a poll are worded is as important as sampling procedure in obtaining valid results.”

The pledge for members of these organizations is that “all reports of survey findings issued for public release by a member organization will include the following information” as basic (“Level 1”) disclosure. There are 11 items, including sponsorship of the survey, dates of interviewing, size of the sample that is the primary basis of the survey report as well as subsample sizes, margin of sampling error (if a probability sample) and “complete wording and ordering of questions mentioned in or upon which the release is based.”

These standards, outside the NCPP membership, are often ignored today.

Polling in Vermont

Vermont-made polls, done by telephone interviewers, have been rare and appear to be becoming rarer. UVM’s Center for Rural Studies does several a year on Vermont topics. VPR and WCAX have commissioned the occasional election-year, issues-related survey.

During the 2010 governor’s race, Vermont Public Radio commissioned a poll from Mason-Dixon Polling gauging opinion on several hot issues: Vermont Yankee relicensing, school district consolidation and health-care reform. The poll is available on VPR.net. It is accompanied by a standard short description of the methodology used and provides the complete questionnaire.

With the launch last year of the Castleton Polling Institute, a member of AAPOR, more systematic public opinion research may be in the cards. The institute, at Castleton State College, conducted its first election-related poll in February, just before the Town Meeting Day primary on March 6. Castleton conducts its polls by phone, using student interviewers.

Director Rich Clark says there will be more election polling, but he hopes that funding will also be available for the kinds of “citizen surveys” that provide a scientific method for “benchmarking, assessing progress, measuring satisfaction, and discovering citizen priorities.” They contribute, he feels, to the citizen input in the policy-making process that is important to democracy.

Clark conducted such polls in his previous position at the University of Georgia’s Carl Vinson Institute of Government’s Survey Research department. While there, he started the quarterly Peach State Polls that looked at policy issues directly affecting the lives of Georgians.

The newly launched institute, the first in-state public opinion research organization, interviewed a random sample of 800 Vermont registered voters about 10 days before the Town Meeting Day primary. The horse-race questions were leavened with questions about amending the Constitution to limit campaign spending (an issue that was on the ballot in 40 towns), increasing the governor’s term from two years to four, President Obama’s handling of his job and how respondents might vote in the presidential election.

Vermont’s media outlets reported almost exclusively on who would take the top spots in the Republican primary. The poll gave Mitt Romney the lead, with Rick Santorum in second and Ron Paul a distant third. As it turned out, by March 6 Ron Paul had almost doubled his predicted vote, going from 14 percent to 25.5 percent, and Rick Santorum dropped nearly four points for a third-place finish with just 23.7 percent of the vote.

Clark says he had been concerned about getting only one reading in advance of the primary. “When we were in the field, Santorum was up, but his support had dropped off by the time of the primary vote and our poll had shown that support was soft.”

He points to the response to a question that went unreported by the media showing a strong likelihood that, among respondents who said they would be voting in the primary, substantial numbers — 48 percent of self-identified Republicans and 41 percent of independents — said they were somewhat or very likely to change their vote. Time passed and, indeed, many voters changed their minds.

Also unreported by the media, the Castleton poll showed dissatisfaction with the choices of Republican presidential candidates. Among Republicans, 37 percent said they were somewhat or very dissatisfied and among independents dissatisfaction registered at 62 percent.

Another poll in February, asking about marijuana legislation in Vermont, is the kind of “public opinion research” on issues whose results the commissioning organization, in this case the Marijuana Policy Project (MPP), hopes will influence legislation. The MPP commissioned a similar poll in 2010 from Mason-Dixon Polling, which does not, it can be noted, adhere to the AAPOR transparency code. What these polls, which are commissioned by an advocacy organization, can tell us is that those sampled were in favor of legalization by a fairly wide margin. This sample may be representative of the full population, but as the questions don’t put the issue in context, a politician would be taking something of a gamble to accept the poll results as declared by the Marijuana Policy Project.

The two marijuana polls are rare in that they try to track evolving opinion. Other moving targets, such as health care reform, “death with dignity” legislation, environmental policy and the Vermont economy have not been tracked. The University of Vermont’s Center for Rural Studies “Vermonter Poll” varies its topics from year to year — it has examined hunger and food security, the arts, Vermonters’ agricultural I.Q., and computer and Internet connectivity in the state — but doesn’t revisit the issues from year to year.

When polls are sponsored by advocacy organizations, the sponsors sometimes do not make this clear when results are published. Usually a margin of sampling error figure declaring a 95 percent confidence level is given. This has become the litmus test of legitimacy for the media and the public, implying, though not in fact substantiating, adherence to transparency in conducting the survey. Without the information the NCPP lists as basic, the value of any findings is suspect.
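
For readers who want to see where a margin of sampling error figure comes from, here is a rough sketch of the textbook calculation at the 95 percent confidence level. It assumes a simple random sample and the most cautious case of an evenly split response; real polls often apply weighting that changes the figure, and nothing here is drawn from any pollster's published methodology. The 800-person sample matches the size of the Castleton poll described above, while the 200-person subsample is a hypothetical number chosen only to show how quickly precision falls for smaller groups.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate margin of sampling error, as a fraction.

    Assumes a simple random sample. proportion=0.5 is the worst case
    (an evenly split response); z=1.96 corresponds to a 95 percent
    confidence level.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


# 800 is the Castleton sample size reported in this article.
full_sample = margin_of_error(800)

# 200 is a hypothetical subsample size, used only for illustration.
subsample = margin_of_error(200)

print(f"n=800: +/- {full_sample * 100:.1f} points")
print(f"n=200: +/- {subsample * 100:.1f} points")
```

The figure depends only on the size of the sample and the assumed split. It says nothing about question wording, question order or how respondents were reached, which is why it cannot stand in for the fuller disclosures the NCPP calls for.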

For example, the anti-immigration, nativist organization Federation for American Immigration Reform, or F.A.I.R., which did an automated poll in Vermont in early January and now reports the results on its website, bought the low-cost automated dialing service offered by Pulse Opinion Research (POR). It turns out that this is POR’s only involvement with the poll. Customers such as F.A.I.R. compose their own wording and question order, contrary to the standards established by NCPP and the AAPOR.

The first question was: “Illegal immigration is an important issue nationally and in Vermont. Overall, is the impact of illegal immigration on Vermont extremely negative, somewhat negative, somewhat positive or extremely positive?” The response was reported in a press release as justifying the conclusion that a majority of Vermonters are strongly opposed to illegal immigration with a focus on 55 percent negative, though responses actually showed only 20 percent “strongly negative.”

Results from polls such as this one by an anti-immigration organization do not have any legitimacy unless they are conducted by an independent, standards-based public opinion research organization. The media too often do not vet methodology before broadcasting the “results.”

Of course, claims in press releases about “Vermonters’ opinion” on an issue should be taken with a grain of salt, in any case, because it is in the sponsoring organization’s interest to highlight any favorable poll results and downplay details that do not build their case.